A log cut into snail shells (public domain image from Simpon Speed)

On Friday 10th December we became aware of an extremely serious security issue in Log4J, a logging component in widespread use by applications written in the Java programming language. The vulnerability has been nicknamed Log4Shell.

What are Log4Shell and Log4J?

Log4J is a library that makes writing data to a log file easier. It’s highly configurable, so it’s easy to send the right level of logging data to the right place, and it includes bits of intelligence so you can log placeholders and have Log4J fill in the correct value for the environment. So if you’re logging an error in your application and you want to know what version of Java is currently running your application, you can log:

${java:version}

which will be replaced with the currently running version number of Java.

However, it is very common for log messages to contain user-supplied data. For example, a login form might log the username from a failed login attempt, and many applications don’t check the data the user supplied for magic values like this. So, if I were to attempt to log in with a username of ${java:version} instead of Pete, the log files would say:

Failed login attempt for user: "OpenJDK Runtime Environment (build 11.0.11+9-Ubuntu-0ubuntu2.20.04)"

rather than what the application developer expected which would be:

Failed login attempt for user: "${java:version}"
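To make the mechanism concrete, here is a toy model of placeholder expansion. It is not Log4J's implementation or API: the `LOOKUPS` table, the `expand` function and the `log_failed_login` helper are all hypothetical names invented for this sketch, and the version string is a made-up example value. It only illustrates why expanding placeholders across the whole message, user input included, produces the surprising log line above.

```python
import re

# Hypothetical lookup table standing in for a logging library's placeholder
# lookups. The returned string is an example value, not a real query.
LOOKUPS = {
    "java:version": lambda: "OpenJDK Runtime Environment (example build)",
}

# Matches ${key} and captures the key.
PLACEHOLDER = re.compile(r"\$\{([^}]+)\}")


def expand(message):
    """Replace every ${key} that has a known lookup; leave unknown ones alone."""
    def substitute(match):
        lookup = LOOKUPS.get(match.group(1))
        return lookup() if lookup else match.group(0)
    return PLACEHOLDER.sub(substitute, message)


def log_failed_login(username):
    # The flaw being illustrated: expansion runs over the finished message,
    # so placeholders typed by the user are expanded too.
    return expand(f'Failed login attempt for user: "{username}"')


print(log_failed_login("Pete"))
print(log_failed_login("${java:version}"))
```

The second call shows the problem: the application never asked for the Java version, but the user-supplied text was treated as an instruction rather than as data.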

One of the other magic strings uses the Lightweight Directory Access Protocol (LDAP) to look up data from a remote server, and the remote server can specify additional software to install and run in order to process the answer from the LDAP server.

If an end user can get a magic LDAP string, pointing at a server they control, into something that is written to a log file, they can make the Java application request code from that server and execute it. This hands full control over the Java application to the person who supplied the magic LDAP string. In effect, you can turn a piece of logged data into an administrative shell on the target server, hence the name Log4Shell.

The vulnerability is very nasty for a number of reasons. Firstly, it’s a trivial-to-exploit remote code execution vulnerability. You literally send the application a URL to the code you want run and it runs it. Secondly, Log4J is very widely used, including in custom software, and many applications are likely to be vulnerable.

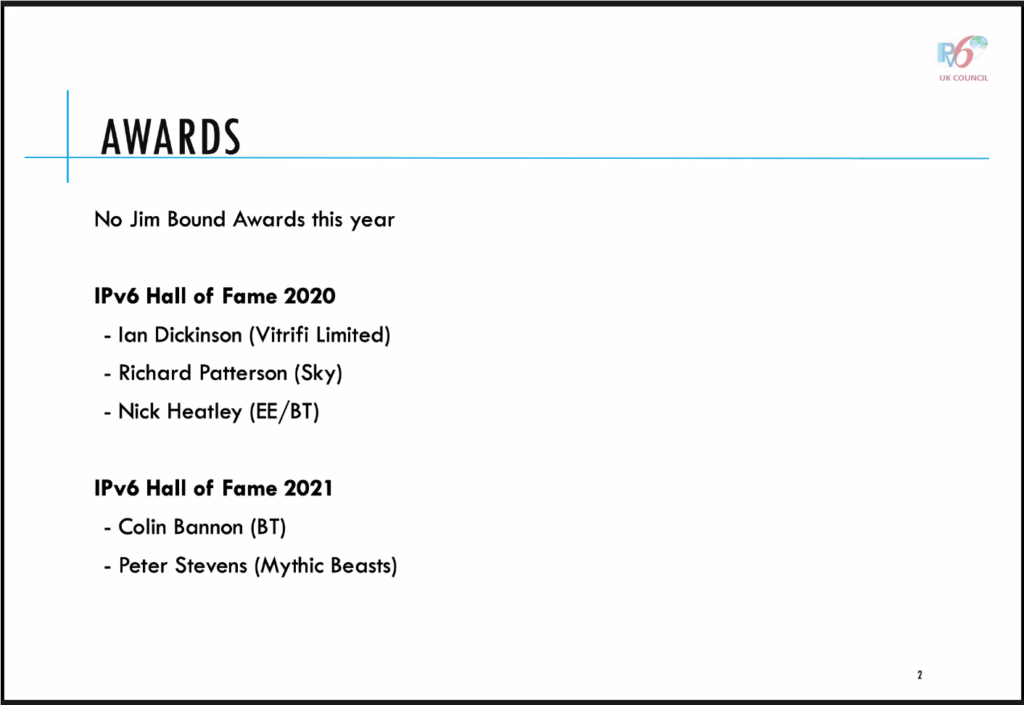

Managed customers

As part of our server management service, we monitor and assess all security advisories for operating system packages, applying fixes for serious 0-day vulnerabilities immediately to customer servers.

Unfortunately, Java applications almost never use system-provided libraries, and will instead bundle their dependencies as part of the application. From the point of view of our managed service, updating Java applications with an embedded Log4J is technically the responsibility of the customer.

However, given the severity and ease of exploit of this vulnerability, we’ve been doing everything we can to help customers who may not even know that they’re reliant on Log4J, let alone where their application is vulnerable.

Going above and beyond

As part of our managed service we install an internally written package called Mythic Reporter. This logs a lot of data from servers every day about what the servers are doing. We then have a centralised process that reads the reports and automates auditing for common issues. With this we can spot things like:

- One of the hardware devices in your storage array is broken or is in a pre-failure state.

- Database replication appears not to be working.

- A filesystem has gone read-only.

- You have mirrored filesystems but not mirrored swap space.

- The cryptographic keys used by ssh are weak or blacklisted.

- You have a database running but no backups configured.

- You’re using the stock i40 network module for Debian which is unstable.

- Your server has thermally throttled.

- … and many others.

We can also utilise this dataset for other things. We log the full process list and listening network sockets for every managed server every day, so it’s a small matter of scripting on our reporter server to find the full list of client servers that have a network-listening application written in Java. We split the work three ways: one staff member wrote the customer notification, one worked out how nasty the security issue was, and one built the full list of likely affected customers.
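A search of this kind over the daily reports might look like the sketch below. The report layout is an assumption made purely for illustration (a `processes` list of `pid`/`command` entries and a `listening` list of `pid`/`port` entries per host), and the function names are hypothetical. This is not the actual Mythic Reporter format or our script.

```python
import os


def is_java(command):
    """True if the first word of the command line is a binary named 'java'."""
    parts = command.split()
    return bool(parts) and os.path.basename(parts[0]) == "java"


def java_listening_ports(report):
    """Ports in one host's report that are held open by a Java process."""
    java_pids = {p["pid"] for p in report["processes"] if is_java(p["command"])}
    return sorted({s["port"] for s in report["listening"] if s["pid"] in java_pids})


def servers_running_java(reports):
    """Map each hostname to its Java listening ports, skipping hosts with none."""
    affected = {}
    for host, report in reports.items():
        ports = java_listening_ports(report)
        if ports:
            affected[host] = ports
    return affected


# Made-up sample data in the assumed layout.
reports = {
    "web1.example.com": {
        "processes": [
            {"pid": 100, "command": "/usr/bin/java -jar /opt/app/app.jar"},
            {"pid": 200, "command": "/usr/sbin/sshd -D"},
        ],
        "listening": [{"pid": 100, "port": 8080}, {"pid": 200, "port": 22}],
    },
    "db1.example.com": {
        "processes": [{"pid": 300, "command": "/usr/sbin/mysqld"}],
        "listening": [{"pid": 300, "port": 3306}],
    },
}

print(servers_running_java(reports))
```

Matching on the binary name alone will miss Java processes started through wrapper scripts or renamed binaries, so a list built this way is best treated as a starting point.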

To every managed server customer running a Java server process, we sent this email:

We have become aware of a serious security vulnerability in the log4j
logging package for Java. You're receiving this email because our
records show that your managed server is running Java.

At this point, a full list of applications that are affected by this
vulnerability is not available, but given the widespread use of log4j,
the severity of the vulnerability (remote code execution) and the
typical ease of exploitation, we strongly recommend investigating
proactively whether any Java applications that you are using are
vulnerable.

Your Mythic Beasts managed service includes monitoring and upgrading of
operating system packages, but does not cover software installed by
other means. Java applications typically rely on JAR files that are not
provided by system packages, and in this case we are not able to detect
or apply necessary upgrades.

You can find more information on the vulnerability, and the affected
versions of log4j, here:

https://www.lunasec.io/docs/blog/log4j-zero-day/

Whilst we cannot assess whether your server is vulnerable to this
vulnerability, we are happy to provide advice based on the information
that we have.

We detected Java running on the following servers:

-- list of servers --

We then opened tickets in our ticket tracking system for all affected customers so we could close them off once we’d confirmed they were either not vulnerable, or had been patched.

Auditing

We then started auditing the identified customer servers, scanning for installations of the Log4J library and notifying customers as to whether the libraries they have installed are vulnerable or not. We utilised reports from software providers to prioritise fixes. For example, Jenkins may be affected depending on the plugins used.
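One common way to scan for Log4J installations is to look inside Java archives for the class that implements the dangerous lookup, `org/apache/logging/log4j/core/lookup/JndiLookup.class`. The sketch below does that, including archives nested inside other archives. It is an illustrative approach rather than the exact scanner we ran; the function names and the nesting depth limit are assumptions. Finding the class only shows that Log4J's core library is bundled, so the version still has to be checked against the advisory.

```python
import io
import os
import zipfile

# Path of the JNDI lookup class inside log4j-core archives.
JNDI_LOOKUP = "org/apache/logging/log4j/core/lookup/JndiLookup.class"
ARCHIVE_SUFFIXES = (".jar", ".war", ".ear")


def contains_jndi_lookup(archive, depth=3):
    """Check a path or file-like archive, descending into nested archives."""
    try:
        with zipfile.ZipFile(archive) as zf:
            for name in zf.namelist():
                if name.endswith(JNDI_LOOKUP):
                    return True
                if depth > 0 and name.lower().endswith(ARCHIVE_SUFFIXES):
                    # Nested archive: read it into memory and scan it too.
                    nested = io.BytesIO(zf.read(name))
                    if contains_jndi_lookup(nested, depth - 1):
                        return True
    except (zipfile.BadZipFile, OSError):
        # Unreadable or corrupt archives are skipped, not treated as hits.
        return False
    return False


def find_log4j_archives(root):
    """Return sorted paths of archives under root that bundle JndiLookup."""
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for filename in filenames:
            if filename.lower().endswith(ARCHIVE_SUFFIXES):
                path = os.path.join(dirpath, filename)
                if contains_jndi_lookup(path):
                    hits.append(path)
    return sorted(hits)
```

For example, `find_log4j_archives("/opt")` would list every archive under `/opt` that needs a closer look. Nested archives are read fully into memory, which is acceptable for a one-off audit but worth bearing in mind for very large application bundles.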

We have worked through the list, contacting every customer to confirm whether we or they could upgrade the affected component, or whether we could mitigate through configuration changes. This afternoon we have been chasing likely affected customers who haven’t responded, to encourage them strongly to work with us to fix this issue.

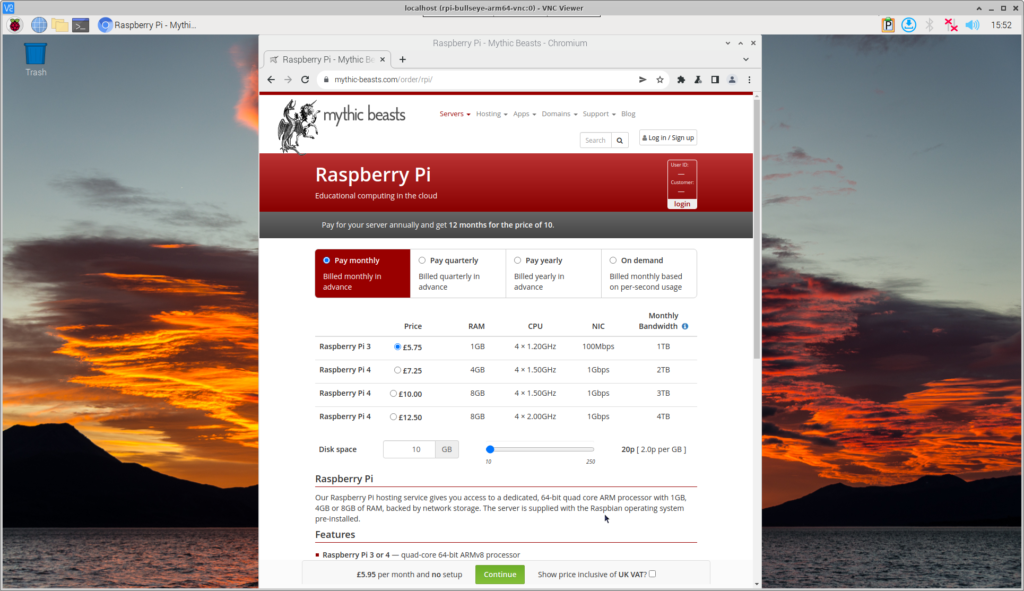

If you run Java-based services and you’re not already a customer of our managed hosting service, then you’ve probably been quite busy over the last few days. If you haven’t been, then you may want to consider signing up.

Dependency management

Log4Shell is a somewhat vicious lesson in dependency management. Every time you import third party code, you need a process for monitoring security advisories for it, and for updating it as required. This is why we have a strong preference for using operating system packages wherever practical, as this delegates the whole problem to the operating system maintainers and makes automatically finding and updating affected libraries trivial. Being able to automatically find vulnerable packages is critical, as you can be guaranteed that when a serious vulnerability is discovered, the bad guys will automate exploiting it.

We have signed up to
